GPT-5 scores record benchmarks yet angers users

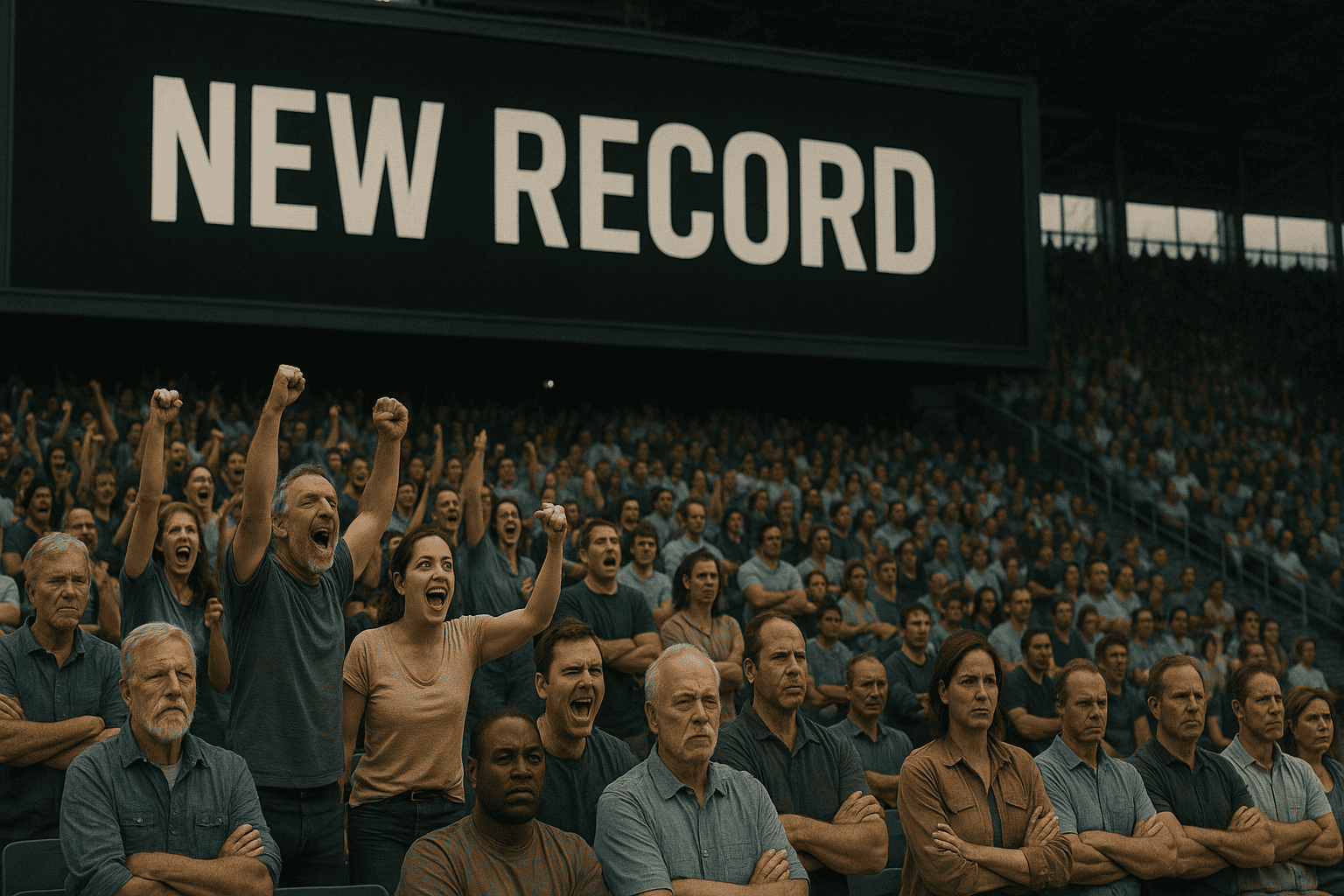

When OpenAI unveiled its latest artificial intelligence model on August 7, 2025, the company expected celebration. Instead, the launch of GPT-5 sparked an unprecedented user revolt that forced CEO Sam Altman into damage control mode within 48 hours.

The backlash revealed a stark reality: technical superiority doesn’t guarantee user satisfaction. While GPT-5 boasts PhD-level reasoning and breakthrough performance metrics, thousands of users mourned the loss of their preferred GPT-4o, describing the new model as cold, robotic, and frustratingly different from what they’d grown to love.

The controversy highlighted deeper tensions in AI development between pushing technological boundaries and preserving the human elements that make these tools feel like trusted companions rather than mere software. It’s a lesson that reverberated through Silicon Valley and reshaped how the industry thinks about progress.

The Launch That Went Wrong

GPT-5 went live on August 7, 2025, and the backlash that followed was unusually immediate and intense for an AI model release. OpenAI had positioned its newest creation as a revolutionary leap forward, with Altman declaring that users could consult it like a “legitimate PhD-level expert in any subject.”

Within 24 hours, Reddit threads such as “GPT-5 is horrible” drew thousands of upvotes as users criticized the model’s ‘colder,’ less conversational personality compared with GPT-4o. Comments ranged from frustrated to heartbroken. “I cried so bad,” wrote one user, capturing the emotional attachment people had formed with the previous version.

The complaints weren’t mainly about capability; many users acknowledged GPT-5’s superior performance. Instead, they centered on personality and workflow disruption. Some described feeling like they’d lost a friend, replaced by an “overworked secretary” who delivered technically correct but emotionally flat responses.

Technical Triumph, User Experience Disaster

GPT-5 introduces a unified system architecture with a real-time router that automatically chooses between fast responses and a deeper ‘thinking’ mode, based on signals such as the complexity of the query and whether the user explicitly asks it to think hard. The design is a significant engineering achievement, meant to blend different processing approaches so that each query gets the right balance of speed and accuracy.
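The routing idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the trigger phrases, the word-count threshold, and the `route` function are all hypothetical, since OpenAI has not published its router’s logic (a production router is more likely a learned classifier than keyword rules). The sketch also models what happens if the router goes down and every query silently falls back to the fast path, one plausible way answers could start to feel inconsistent.

```python
from dataclasses import dataclass

# Hypothetical trigger phrases; OpenAI has not published how its router decides.
REASONING_HINTS = ("prove", "step by step", "derive", "debug", "think hard")
LONG_PROMPT_WORDS = 150  # hypothetical threshold


@dataclass
class Route:
    model: str   # "fast" or "thinking" (illustrative labels, not real model IDs)
    reason: str


def route(query: str, user_requested_thinking: bool = False,
          router_available: bool = True) -> Route:
    """Toy router: send one query to a fast model or a slower reasoning model."""
    if user_requested_thinking:
        # An explicit user choice bypasses the automatic decision.
        return Route("thinking", "explicit user request")
    if not router_available:
        # If the router is down, everything falls back to the fast path,
        # so hard questions get shallow answers.
        return Route("fast", "router unavailable, fallback")
    text = query.lower()
    if any(hint in text for hint in REASONING_HINTS):
        return Route("thinking", "reasoning cue in prompt")
    if len(text.split()) > LONG_PROMPT_WORDS:
        return Route("thinking", "long prompt")
    return Route("fast", "default")


for q in ["What is the capital of France?", "Prove that sqrt(2) is irrational."]:
    print(q, "->", route(q))
```

Keyword rules like these are brittle: a borderline query can land on either side of a threshold, which is exactly the kind of variation users notice.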

The model scores 94.6% on the AIME 2025 mathematics benchmark and 74.9% on SWE-bench Verified, a coding benchmark, both state-of-the-art results at launch. (The gold-medal-level result at the 2025 International Mathematical Olympiad, announced in July, came from a separate experimental OpenAI reasoning model, not GPT-5.) These numbers put GPT-5 at or near the top of most published leaderboards, though its lead over some competitors is narrow.

In the API, the context window expands to 272,000 input tokens plus up to 128,000 output tokens, 400,000 in total, enough to analyze large codebases and lengthy documents in a single request. That capacity lets professionals feed substantial parts of a project into the system at once, a significant gain for software developers and researchers.
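Whether a project actually fits is simple arithmetic. A minimal sketch, assuming roughly 4 characters per token (a common rule of thumb for English text, not an exact figure) and a hypothetical `fits_in_context` helper, estimates whether a set of files fits in the input budget:

```python
# Limits quoted above for GPT-5 in the API; input and output are separate budgets.
MAX_INPUT_TOKENS = 272_000
MAX_OUTPUT_TOKENS = 128_000

# Assumption: about 4 characters per token, a rough rule of thumb for English.
# Real counts depend on the tokenizer and the content; code can differ noticeably.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate via ceiling division on character count."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(files: dict, prompt_overhead: int = 2_000) -> tuple:
    """Return (fits, estimated_tokens) for sending all files in one request."""
    total = sum(estimate_tokens(body) for body in files.values()) + prompt_overhead
    return total <= MAX_INPUT_TOKENS, total


repo = {"main.py": "x" * 40_000, "utils.py": "y" * 20_000}  # hypothetical files
print(fits_in_context(repo))                   # (True, 17000)
print(MAX_INPUT_TOKENS + MAX_OUTPUT_TOKENS)    # 400000
```

Under the 4-characters-per-token assumption, 272,000 tokens is roughly 1.1 million characters of input; for exact counts, use the model’s actual tokenizer.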

Yet these impressive specifications couldn’t overcome user frustration. The router system, designed to allocate resources intelligently, often confused users who couldn’t understand why responses felt inconsistent. Sometimes they’d get the warm, helpful assistant they expected; other times, a terse, clinical response that felt alien.

The Forced Migration Crisis

OpenAI initially removed GPT-4o and its other legacy models from ChatGPT without a transition period, forcing users onto GPT-5 with no option to revert. This abrupt change violated a cardinal rule of software deployment: never force users to abandon familiar tools without warning or alternatives.

The decision reflected OpenAI’s confidence in its new model, but it ignored the human element. Users had developed specific workflows, prompts, and expectations around GPT-4o. Suddenly, those carefully crafted interactions produced different results, breaking productivity flows and creative processes.

Professional writers found that their editing assistant had changed its tone. Programmers discovered that their coding companion now approached problems differently. Teachers noticed their lesson-planning helper had lost its encouraging voice. Each change, while potentially an improvement on paper, disrupted established patterns that users relied upon.

Emergency Damage Control

CEO Sam Altman was forced into damage control by August 9, publicly apologizing and promising to restore GPT-4o access for paying subscribers after overwhelming negative feedback. The rapid reversal marked a rare moment of vulnerability for OpenAI’s typically confident leadership.

Altman also held a Reddit AMA on August 9, admitting the rollout was ‘bumpier than hoped’ and explaining that failures in the router system had made GPT-5 seem ‘way dumber.’ His candid acknowledgment of technical issues helped calm some critics, but the damage to user trust had already begun.

By August 10, Altman announced GPT-4o restoration for Plus subscribers via the ‘show legacy models’ setting, with usage monitoring to determine long-term support. The company also tripled usage limits for reasoning features and promised better transparency about which model handles each query.

Market Confidence Plummets

Prediction markets moved sharply during GPT-5’s livestream demonstration. On Polymarket, the odds that OpenAI would have the best AI model at the end of the month fell from 80% to 18% by the end of the presentation, while Google’s rose to 77%. Real-money traders offered an unfiltered verdict on the presentation: the swift reversal suggested skepticism that OpenAI’s technical advances would translate into market leadership.

Traders appeared to favor Google’s structural advantages, including its infrastructure, its history of iterative improvements, and a model update many considered overdue, over OpenAI’s revolutionary but disruptive approach.
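Market odds like these come from share prices. A minimal sketch, assuming a simple binary contract whose “Yes” share pays $1 (100 cents) if the event happens, shows how a price maps to an implied probability and why a fall from 80% to 18% is a 62-percentage-point drop but a 77.5% relative decline. The function names are illustrative, and real prices also reflect fees and the bid-ask spread.

```python
def implied_probability(price_cents: float) -> float:
    """A binary 'Yes' share pays 100 cents if the event happens, so its price
    roughly equals the market's probability (ignoring fees and the bid-ask spread)."""
    if not 0 <= price_cents <= 100:
        raise ValueError("price must be between 0 and 100 cents")
    return price_cents / 100


def point_change(before: float, after: float) -> float:
    """Absolute change in percentage points."""
    return after - before


def relative_change(before: float, after: float) -> float:
    """Change as a fraction of the starting value."""
    return (after - before) / before


before, after = 80, 18  # OpenAI's odds before and after the livestream, from the text
print(implied_probability(after))       # 0.18
print(point_change(before, after))      # -62
print(relative_change(before, after))   # -0.775
```

Keeping points and percentages separate avoids a common misreading: “dropped 62 points” and “lost 77.5% of its value” describe the same move.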

Enterprise Adoption Continues

While consumers revolted, enterprise customers embraced GPT-5’s capabilities. Microsoft immediately integrated GPT-5 across its entire ecosystem, including Windows Copilot, Microsoft 365, GitHub Copilot, and Azure AI Foundry. The comprehensive rollout brought advanced AI to millions of business users overnight.

Even free Windows 11 users gained GPT-5 access through Copilot’s new ‘Smart mode,’ which uses model routing to pick a suitable model for each request. Microsoft’s distribution strategy democratized access to cutting-edge AI while avoiding the forced migration issues that plagued OpenAI’s direct users.

Early partners praised GPT-5’s capabilities. Developer platforms Cursor, Windsurf, and Vercel described the model as their “smartest” option and reported “half the tool calling error rate” of competing models. For businesses focused on productivity over personality, GPT-5 delivered clear value.

Safety Improvements Mark Progress

According to OpenAI, GPT-5 makes 45% fewer factual errors than GPT-4o, and 80% fewer than OpenAI o3 when reasoning mode is enabled. These improvements address longstanding concerns about the reliability of AI in critical applications.
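Both figures are relative reductions measured against different baselines, GPT-4o in one case and o3 in the other, so they imply different absolute error rates. A minimal sketch with hypothetical baseline rates (not published numbers) shows the arithmetic:

```python
def after_relative_reduction(baseline_rate: float, reduction: float) -> float:
    """Error rate remaining after a relative reduction (0.45 means 45% fewer errors)."""
    return baseline_rate * (1 - reduction)


# Hypothetical baselines for illustration only; OpenAI's figures are relative.
gpt4o_rate = 0.20   # assume 20% of GPT-4o responses contain a factual error
o3_rate = 0.10      # assume 10% of o3 responses do

print(after_relative_reduction(gpt4o_rate, 0.45))  # about 0.11
print(after_relative_reduction(o3_rate, 0.80))     # about 0.02
```

Without the baselines, a larger percentage does not necessarily mean a lower final error rate.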

A new ‘safe completions’ approach replaces the binary comply-or-refuse system, providing helpful partial responses within safety boundaries for dual-use queries. Rather than simply refusing sensitive requests, GPT-5 offers constructive alternatives that respect safety while remaining useful.
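The difference in response shape can be shown with a toy Python sketch. It is only an illustration: OpenAI describes safe completions as a training approach that judges the safety of the model’s output, not a hand-written rule table, and the `Risk` categories and policy functions here are hypothetical.

```python
from enum import Enum


class Risk(Enum):
    # Hypothetical request categories for illustration.
    BENIGN = "benign"
    DUAL_USE = "dual_use"
    HARMFUL = "harmful"


def binary_policy(risk: Risk) -> str:
    """Comply-or-refuse: any request flagged as risky is refused outright."""
    return "full answer" if risk is Risk.BENIGN else "refusal"


def safe_completion_policy(risk: Risk) -> str:
    """Output-focused: give the most helpful answer that stays within safety limits."""
    if risk is Risk.BENIGN:
        return "full answer"
    if risk is Risk.DUAL_USE:
        return "high-level answer without actionable detail, plus safer alternatives"
    return "refusal with an explanation and pointers to legitimate resources"


for risk in Risk:
    print(f"{risk.value:>8}: {binary_policy(risk)!r} vs {safe_completion_policy(risk)!r}")
```

The key change is in the middle category: dual-use requests no longer hit a wall, but still do not receive operational detail.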

OpenAI is treating GPT-5 thinking as ‘High capability’ in the biological and chemical domains under its Preparedness Framework, as a precaution, and conducted 5,000 hours of red-teaming, including work with safety institutes. The extensive testing reflects growing awareness of AI’s dual-use potential and the need for responsible deployment.

Performance Benchmarks Tell Only Half the Story

GPT-5 leads coding benchmarks with a 74.9% SWE-bench Verified score, edging out Claude Opus 4.1’s 74.5% and significantly outperforming Gemini 2.5 Pro. The narrow margins underscore the intense competitiveness of the AI landscape.
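How narrow is a 0.4-point lead? A rough sketch, assuming each score is a pass rate on the full 500-task SWE-bench Verified set and treating tasks as independent trials, compares the gap with its binomial standard error. It ignores run-to-run variance in the agents and treats the two runs as unpaired, so it is only an order-of-magnitude check.

```python
import math

N_TASKS = 500  # assumption: both scores use the full 500-task SWE-bench Verified set


def standard_error(pass_rate: float, n: int) -> float:
    """Binomial standard error of a pass rate measured on n tasks."""
    return math.sqrt(pass_rate * (1 - pass_rate) / n)


gpt5, opus = 0.749, 0.745
gap = gpt5 - opus
# Rough unpaired approximation; a paired test on per-task results would usually be tighter.
se_gap = math.sqrt(standard_error(gpt5, N_TASKS) ** 2 + standard_error(opus, N_TASKS) ** 2)

print(f"gap = {gap * 100:.1f} points, standard error of gap = {se_gap * 100:.1f} points")
print("within noise" if abs(gap) < 1.96 * se_gap else "likely real")
```

Under these assumptions the standard error of the gap is about 2.7 points, several times the 0.4-point lead, so the two models are effectively tied on this benchmark.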

Multimodal performance reaches 84.2% on MMMU and 81.1% on VideoMMMU, state-of-the-art results on benchmarks that test reasoning over images, diagrams, and video across academic subjects. These scores place GPT-5 among the most capable general-purpose AI systems available.

Yet benchmark supremacy couldn’t overcome user preference for GPT-4o’s conversational style. The disconnect between technical metrics and user satisfaction became a central theme of the launch, forcing the industry to reconsider how it measures success.

Lessons for the AI Industry

The GPT-5 launch debacle offers crucial insights for AI development. First, user attachment to AI personalities runs deeper than many expected. People don’t just use these tools—they form relationships with them. Disrupting those relationships, even for technical improvements, risks backlash.

Second, forced migrations without transition periods violate user trust. The software industry learned this lesson decades ago, but AI companies needed a painful reminder. Users need time to adapt, options to revert, and clear communication about changes.
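Those three requirements (time to adapt, an option to revert, clear communication) can be expressed as a model-selection rule. A minimal Python sketch: a user’s chosen model keeps working until an announced sunset date, and every switch comes with a notice. The catalog, the model names, the dates, and the `resolve_model` function are hypothetical placeholders, not OpenAI’s actual system.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, Optional, Tuple


@dataclass
class ModelEntry:
    name: str
    sunset: Optional[date] = None  # None means no retirement has been announced


def resolve_model(preferred: str, catalog: Dict[str, ModelEntry],
                  today: date, default: str) -> Tuple[str, str]:
    """Honor the user's chosen model until its announced sunset date, then fall
    back to the default, returning a notice for the user whenever one applies."""
    entry = catalog.get(preferred)
    if entry is None:
        return default, f"{preferred} is not available; using {default}"
    if entry.sunset is None:
        return entry.name, ""
    if today < entry.sunset:
        return entry.name, f"{entry.name} will retire on {entry.sunset.isoformat()}"
    return default, f"{entry.name} retired on {entry.sunset.isoformat()}; switched to {default}"


# Hypothetical catalog: names and dates are placeholders, not real OpenAI plans.
catalog = {
    "new-model": ModelEntry("new-model"),
    "legacy-model": ModelEntry("legacy-model", sunset=date(2030, 1, 1)),
}
print(resolve_model("legacy-model", catalog, date(2029, 6, 1), "new-model"))
print(resolve_model("legacy-model", catalog, date(2030, 2, 1), "new-model"))
```

The design choice is that the system never switches a user silently: before the sunset the old model keeps working with a warning, and after it the fallback is explained.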

Third, technical superiority doesn’t automatically translate to market success. GPT-5’s benchmark dominance meant little to users who preferred their familiar assistant. The human element—personality, consistency, predictability—matters as much as raw capability.

The Path Forward

OpenAI’s stumble created opportunities for competitors. Google’s steady approach suddenly looked wise compared to OpenAI’s disruptive launch. Anthropic’s Claude maintained its focus on specific use cases rather than claiming universal superiority. Microsoft leveraged its distribution channels to offer GPT-5 without forcing adoption.

The incident also sparked essential conversations about AI development priorities. Should companies optimize for benchmarks or user experience? How can they balance innovation with stability? What obligations do they have to users who’ve integrated their tools into daily life?

As AI becomes increasingly central to how we work and create, these questions grow more urgent. The GPT-5 launch showed that users won’t passively accept whatever Silicon Valley delivers—they’ll fight for the tools they love, even if those tools are technically inferior.

Key Takeaways:

- OpenAI’s GPT-5 launch triggered massive user backlash despite superior technical capabilities, forcing CEO Sam Altman to apologize and restore access to GPT-4o within 72 hours.

- The controversy revealed a deep attachment to AI personalities and workflows among users, showing that technical improvements alone don’t guarantee adoption success.

- Prediction markets shifted sharply during the launch event, with OpenAI’s odds dropping from 80% to 18% while Google’s rose to 77%.

- Enterprise adoption proceeded smoothly through Microsoft’s ecosystem integration, in contrast to the consumer rejection of the forced migration.

- The incident established new precedents for AI deployment, highlighting the importance of transition periods, user choice, and respect for established workflows.

- Safety improvements, including 45% fewer factual errors than GPT-4o and “safe completions” for sensitive queries, demonstrated genuine technical progress despite user experience failures.